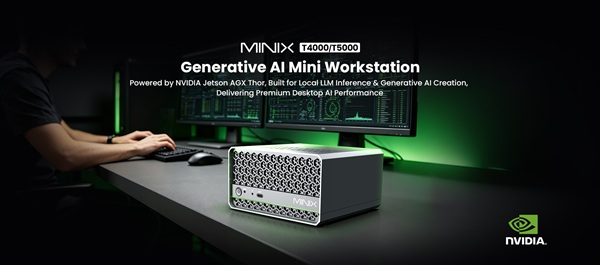

MINIX has launched its latest generative AI mini workstations, the T4000 and T5000, designed to deliver high-performance artificial intelligence capabilities in a compact, desktop-friendly form factor.

Built on NVIDIA Jetson AGX Thor modules and powered by NVIDIA's Blackwell architecture, the new systems bring enterprise-grade AI processing to edge and on-premises environments. The T4000 offers up to 1,200 sparse FP4 TFLOPS of AI performance, while the T5000 scales up to 2,070 TFLOPS, enabling efficient local inference for large language models (LLMs) ranging from 7B to 70B parameters.

The mini workstations are engineered for developers, enterprises, and content creators seeking private, low-latency AI computing without relying on cloud infrastructure. By supporting on-device processing, the systems address growing concerns around data privacy, security, and latency in AI deployments.

Both models feature multi-core Arm-based CPUs (12 cores on the T4000 and 14 on the T5000) paired with up to 128 GB of high-bandwidth LPDDR5X memory. This configuration is intended to handle multi-modal AI workloads, concurrent processes, and lightweight model training tasks such as LoRA fine-tuning and dataset processing.
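
To put the 7B to 70B range in context against the 128 GB memory ceiling, here is a minimal back-of-the-envelope sketch. It assumes weight size is simply parameter count times bits per parameter; the function name `weight_memory_gb` and the chosen precisions are illustrative and do not come from MINIX or NVIDIA. KV cache, activations, and operating-system overhead are ignored, so real memory use will be higher.

```python
# Rough weight-memory estimate: parameters x bits per parameter.
# Assumption: ignores KV cache, activations, and runtime overhead,
# so actual usage is higher than these figures.

MEMORY_GB = 128  # maximum LPDDR5X capacity quoted in the article


def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Return the approximate weight size in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billion * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    for params in (7, 70):          # model sizes named in the article
        for bits in (16, 8, 4):     # illustrative precisions
            size = weight_memory_gb(params, bits)
            verdict = "fits" if size < MEMORY_GB else "does not fit"
            print(f"{params}B @ {bits}-bit: {size:.1f} GB ({verdict} in {MEMORY_GB} GB)")
```

Under these assumptions, a 70B model at 16 bits per weight needs about 140 GB for weights alone and would not fit, while 8-bit (about 70 GB) or 4-bit (about 35 GB) quantization would. This suggests the upper end of the range depends on quantized models.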

For connectivity, the devices are equipped with dual 10GbE ports, Wi-Fi 6E, and Bluetooth 5.3 for fast data transfer in enterprise workflows. The systems also include multiple USB ports, dual HDMI 2.1 outputs for 4K displays, and expandable storage starting with a 1 TB PCIe 4.0 NVMe SSD.
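
As a rough illustration of what a 10GbE link means for moving model files, the sketch below estimates best-case transfer time from file size and line rate. The function name `transfer_seconds`, the `efficiency` parameter, and the 35 GB example size are hypothetical choices for illustration. Real throughput also depends on protocol overhead, storage speed, and the network path.

```python
def transfer_seconds(size_gb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Seconds to move size_gb gigabytes over a link_gbps gigabit-per-second link.

    efficiency is an assumed fraction of line rate actually achieved (0 < e <= 1).
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link_gbps must be positive and efficiency in (0, 1]")
    # 1 byte = 8 bits, so size in gigabits is size_gb * 8.
    return size_gb * 8 / (link_gbps * efficiency)


if __name__ == "__main__":
    size_gb = 35.0  # illustrative model file size, not a vendor figure
    for label, rate in (("1GbE", 1.0), ("10GbE", 10.0)):
        print(f"{label}: {transfer_seconds(size_gb, rate):.0f} s at full line rate")
```

At full line rate, a 35 GB file takes 28 seconds over one 10GbE port, compared with 280 seconds over gigabit Ethernet.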

Despite their powerful specifications, the T4000 and T5000 maintain a compact footprint—measuring just 139.3 × 131 × 76.8 mm—and do not require traditional rack-mounted infrastructure. Advanced cooling systems and durable chassis design enable sustained high-performance operation in demanding environments.

The workstations come pre-installed with Ubuntu 24.04 LTS and are compatible with NVIDIA’s AI software ecosystem, including CUDA, TensorRT, and containerized AI workflows, which should ease integration into existing enterprise and developer pipelines.

Positioned for a wide range of use cases—from local AI chat and document processing to generative content creation and private enterprise AI hubs—the new MINIX systems reflect a growing shift toward edge AI and decentralized computing.

As organizations increasingly prioritize secure, on-premises AI solutions, compact yet powerful systems like the T4000 and T5000 are expected to play a key role in enabling scalable, real-time AI applications without the need for large-scale data center infrastructure.