Advanced Micro Devices (AMD) is positioning its latest accelerator hardware, the AMD Instinct MI350P PCIe cards, as a third path for enterprise AI adoption—offering high-performance artificial intelligence computing that fits into existing data center infrastructure without requiring full system overhauls or cloud migration.

The announcement reflects a growing industry shift as organizations struggle with the rising costs, privacy concerns, and infrastructure limitations of large-scale AI deployments in cloud environments.

Drop-in AI acceleration for existing data centers

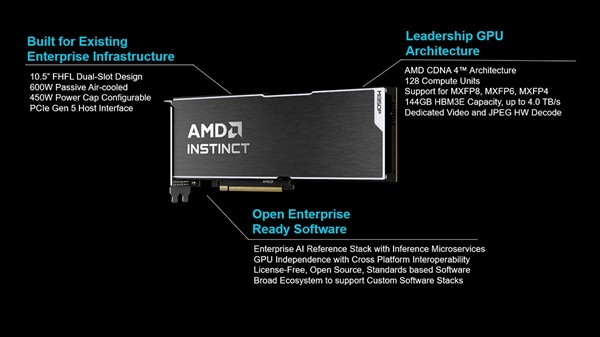

Unlike traditional GPU clusters that require dedicated high-power infrastructure, the AMD Instinct MI350P PCIe cards are designed as dual-slot, air-cooled accelerators that can be installed in standard enterprise servers.

This allows companies to deploy AI inference workloads—such as retrieval-augmented generation (RAG) pipelines and model serving—within existing racks, cooling systems, and power budgets.

AMD says the design enables enterprises to scale AI without rebuilding their data centers, offering a practical alternative between cloud AI services and expensive GPU cluster upgrades.

High-performance AI computing for enterprise workloads

The MI350P PCIe architecture delivers up to 2,299 TFLOPS of estimated performance, scaling to 4,600 TFLOPS in MXFP4 precision, positioning it among the highest-performing enterprise PCIe AI accelerators currently available.

Key hardware features include:

- 144GB HBM3E memory with up to 4TB/s bandwidth

- Support for MXFP4, MXFP6, FP8, BF16, and INT8 precision formats

- Built-in sparsity acceleration for improved efficiency

- Optimized throughput for AI inference workloads

These capabilities are designed to support small to large AI models while reducing power consumption and cooling requirements in traditional enterprise environments.

Open ecosystem strategy for enterprise AI

AMD is also emphasizing an open software ecosystem strategy, enabling integration with widely used AI tools and frameworks such as PyTorch and Kubernetes-based GPU management systems.

The company’s AI software stack includes:

- Kubernetes GPU Operator for lifecycle management

- AMD Inference Microservices for cloud-native deployment

- Open-source enterprise AI reference stack (no licensing fees)

This approach allows enterprises to migrate AI workloads with minimal code changes while avoiding vendor lock-in and per-token AI service costs associated with cloud-based models.

AI infrastructure shift: on-prem vs cloud vs hybrid

AMD’s positioning highlights an evolving enterprise debate: whether to run AI workloads in the cloud, on-premises infrastructure, or hybrid environments.

While cloud platforms offer scalability, they often introduce unpredictable costs and data privacy concerns. On-prem GPU platforms, meanwhile, have traditionally required expensive infrastructure redesigns.

The MI350P PCIe solution aims to bridge this gap by enabling high-performance AI deployment within existing data center constraints, giving enterprises more flexibility in how they scale AI adoption.